What Does a Responsible AI Framework Look Like for Canadian Companies in 2026?

A responsible AI framework for Canadian companies rests on five pillars: AI system inventory, bias testing and fairness, documentation and transparency, human oversight mechanisms, and continuous monitoring. The Artificial Intelligence and Data Act (AIDA), proposed in Bill C-27, set out legal requirements for high-impact AI systems operating in Canada, including risk assessments, record-keeping, and harm mitigation measures. Companies deploying AI in hiring, credit decisions, healthcare, or safety-critical applications should put governance structures in place now rather than wait for enforcement.

Talk to our AI governance team about building your framework.

Bill C-27 and AIDA: What You Need to Know

The Artificial Intelligence and Data Act (AIDA), introduced as part of Bill C-27, was drafted as Canada's first federal AI regulation. The bill lapsed when Parliament was prorogued in January 2025, so the points below describe the proposal as tabled rather than law in force:

- Scope: Applies to "high-impact" AI systems, meaning those that can affect health, safety, human rights, or economic interests

- Requirements: Risk assessments, bias mitigation, transparency measures, record-keeping

- Penalties: Up to $10M or 3% of global revenue for non-compliance

- Timeline: No in-force date is set; timing depends on successor legislation and any transition period it provides

High-impact AI systems include: automated hiring tools, credit scoring, medical diagnosis, predictive policing, and any AI making decisions that significantly affect individuals.

The Five Governance Pillars

1. AI System Inventory. Document every AI system your organisation uses or deploys. Include: purpose, data sources, decision scope, affected populations, risk level. Most Canadian organisations discover they have 3-5x more AI systems than they realised.

2. Bias Testing and Fairness. Test models for demographic bias across the protected characteristics (prohibited grounds of discrimination) in the Canadian Human Rights Act. Use statistical parity, equalised odds, and calibration metrics. Retest after every model update.

3. Documentation and Transparency. Maintain model cards for every production AI system: architecture, training data, performance metrics, known limitations. Users must understand when they are interacting with AI and how it affects decisions about them.

4. Human Oversight. Define escalation paths for AI decisions. Not every decision needs human review, but high-stakes decisions (hiring, credit, safety) require human-in-the-loop or human-on-the-loop oversight.

5. Continuous Monitoring. Track model performance, drift, fairness metrics, and incident reports in production. Set automated alerts that fire when metrics deviate from agreed thresholds.
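
As a rough illustration of pillars 2 and 5, the sketch below computes two of the metrics named above (statistical parity and equalised odds gaps) from labelled predictions and flags any that exceed a threshold. The record layout, function names, sample data, and the 0.1 threshold are all assumptions made for illustration, not values taken from AIDA or the Canadian Human Rights Act. Calibration is omitted for brevity.

```python
from collections import defaultdict


def rate(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def group_rates(records):
    """Per-group selection rate, true positive rate and false positive rate.

    Each record is a (group, actual, predicted) tuple with 0/1 labels.
    """
    counts = defaultdict(
        lambda: {"n": 0, "selected": 0, "tp": 0, "fp": 0, "pos": 0, "neg": 0}
    )
    for group, actual, predicted in records:
        c = counts[group]
        c["n"] += 1
        c["selected"] += predicted
        if actual == 1:
            c["pos"] += 1
            c["tp"] += predicted
        else:
            c["neg"] += 1
            c["fp"] += predicted
    return {
        g: {
            "selection_rate": rate(c["selected"], c["n"]),
            "tpr": rate(c["tp"], c["pos"]),
            "fpr": rate(c["fp"], c["neg"]),
        }
        for g, c in counts.items()
    }


def fairness_gaps(records):
    """Largest between-group gap for each metric."""
    rates = group_rates(records)

    def spread(key):
        values = [r[key] for r in rates.values()]
        return max(values) - min(values)

    return {
        # Statistical parity: difference in selection rates between groups.
        "statistical_parity_gap": spread("selection_rate"),
        # Equalised odds: worst of the TPR gap and the FPR gap.
        "equalised_odds_gap": max(spread("tpr"), spread("fpr")),
    }


def breached(gaps, threshold=0.1):
    """Names of metrics whose gap exceeds the (illustrative) alert threshold."""
    return sorted(name for name, gap in gaps.items() if gap > threshold)


# Hypothetical sample: (group, actual outcome, model prediction)
SAMPLE = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 1), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 0, 0), ("B", 0, 0),
]
gaps = fairness_gaps(SAMPLE)
alerts = breached(gaps)
print(gaps, alerts)
```

In practice the same check would run after each model update and on production data, with the threshold set by your own governance policy.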

PIPEDA Intersection

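AI systems processing personal information must comply with PIPEDA. Its fair information principles bear on automated decision-making as follows:

- Consent: Organisations need meaningful consent to collect, use, or disclose personal information, including when it feeds automated decisions about an individual

- Explanation: Under the openness principle, organisations should be able to explain how they handle personal information, including how automated decisions are made

- Access: Individuals can request access to their personal data used by AI systems

- Challenge: Individuals can challenge an organisation's compliance; offering human review of automated decisions is a practical way to support this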

90-Day Implementation Roadmap

Days 1-30: Inventory all AI systems. Classify by risk level. Identify high-impact systems requiring immediate governance.

Days 31-60: Implement bias testing for high-impact systems. Create model cards. Establish human oversight procedures. Draft your AI governance policy.

Days 61-90: Deploy monitoring dashboards. Train staff on governance procedures. Conduct first governance review. Document everything for regulatory readiness.
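
The Days 1-30 step (inventory, then classify by risk) can be sketched as a simple record type plus a screening rule. This is a minimal sketch under stated assumptions: the field names mirror the inventory attributes listed under pillar 1, while the class name, the use-case labels, and the two-tier risk rule are hypothetical and would need to follow whatever definitions apply to your organisation.

```python
from dataclasses import dataclass, field

# Hypothetical use-case labels, loosely based on the high-impact examples
# in this article (hiring, credit, medical, policing, safety).
HIGH_IMPACT_USES = {"hiring", "credit", "medical", "policing", "safety"}


@dataclass
class AISystemRecord:
    """One inventory entry per AI system used or deployed."""
    name: str
    purpose: str
    use_case: str
    data_sources: list = field(default_factory=list)
    decision_scope: str = ""
    affected_populations: list = field(default_factory=list)
    vendor: str = "in-house"  # third-party tools are inventoried too

    @property
    def risk_level(self):
        # Illustrative two-tier rule; a real policy may need more tiers.
        return "high-impact" if self.use_case in HIGH_IMPACT_USES else "standard"


def needs_immediate_governance(inventory):
    """Names of systems that should be prioritised in Days 31-60."""
    return [s.name for s in inventory if s.risk_level == "high-impact"]


inventory = [
    AISystemRecord(
        name="resume-screener",
        purpose="Rank job applicants",
        use_case="hiring",
        data_sources=["applicant CVs"],
        vendor="third-party",
    ),
    AISystemRecord(
        name="chat-summariser",
        purpose="Summarise support tickets",
        use_case="productivity",
    ),
]
priority = needs_immediate_governance(inventory)
print(priority)
```

Keeping the vendor field on every record makes it harder to overlook third-party tools, which still carry governance obligations for the deploying company.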

Frequently Asked Questions

Does AIDA apply to my company if I only use third-party AI tools?

Yes. If you deploy AI systems that make high-impact decisions — even if the AI was built by a third party — you bear responsibility for governance, bias testing, and oversight. Using a vendor's AI does not transfer your compliance obligations.

What qualifies as a "high-impact" AI system under AIDA?

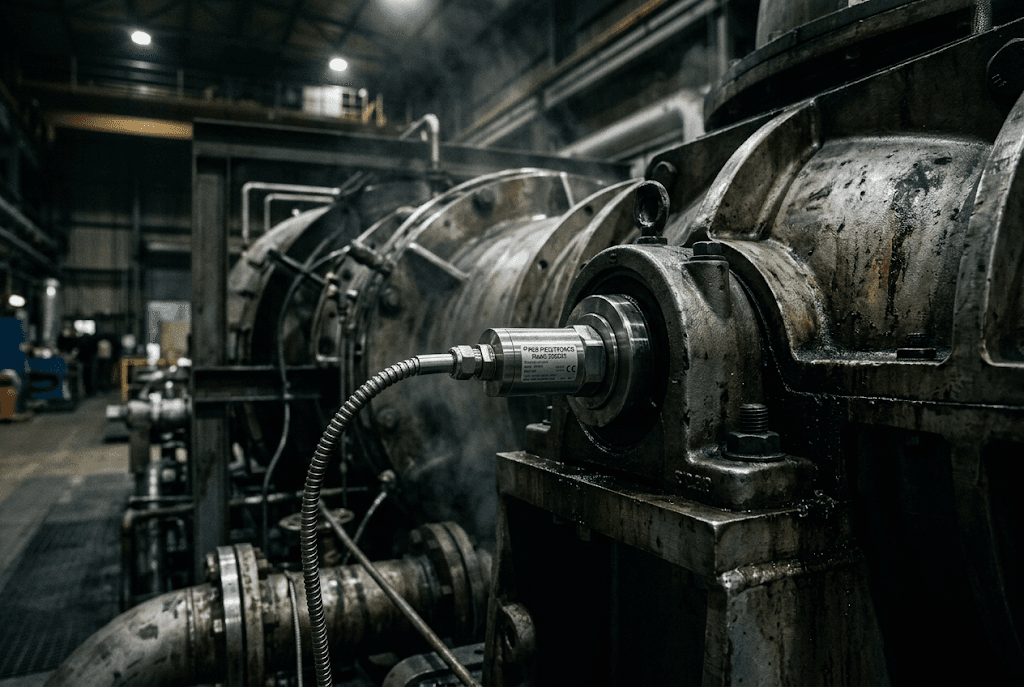

The legislation defines high-impact systems as those that could cause significant harm to individuals. Practical examples: AI-powered hiring screening, automated credit decisions, medical triage, safety monitoring systems, and predictive maintenance systems that affect worker safety.

How much does an AI governance programme cost?

Initial implementation: CAD $50,000-$150,000 depending on the number of AI systems and complexity. Ongoing governance (monitoring, testing, documentation): CAD $30,000-$80,000/year. Compare this against potential AIDA penalties of $10M+ for non-compliance.
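
To make the comparison concrete, the arithmetic can be worked through with the figures quoted above. This is a back-of-envelope sketch only. It assumes ongoing costs are incurred in every year alongside the initial implementation, and that the penalty ceiling is the greater of the flat amount and the revenue percentage; the function names and the example revenue figure are hypothetical.

```python
def programme_cost_range(years, initial=(50_000, 150_000), annual=(30_000, 80_000)):
    """Low and high total programme cost in CAD over the given number of years.

    Assumption: ongoing governance costs apply in each year, on top of the
    one-off initial implementation cost.
    """
    low = initial[0] + years * annual[0]
    high = initial[1] + years * annual[1]
    return low, high


def penalty_ceiling(global_revenue, flat_cap=10_000_000, revenue_percent=3):
    """Illustrative penalty ceiling in CAD.

    Assumption: the ceiling is the greater of the flat cap and the given
    percentage of global revenue. Integer arithmetic avoids rounding noise.
    """
    return max(flat_cap, global_revenue * revenue_percent // 100)


three_year = programme_cost_range(3)
ceiling = penalty_ceiling(500_000_000)  # hypothetical CAD $500M global revenue
print(three_year, ceiling)
```

On these assumptions, three years of governance costs between CAD $140,000 and CAD $390,000, against a ceiling of CAD $15M for a company with CAD $500M in global revenue.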

Droz Technologies helps Canadian companies build responsible AI frameworks. Talk to our governance team about your AI compliance requirements.
